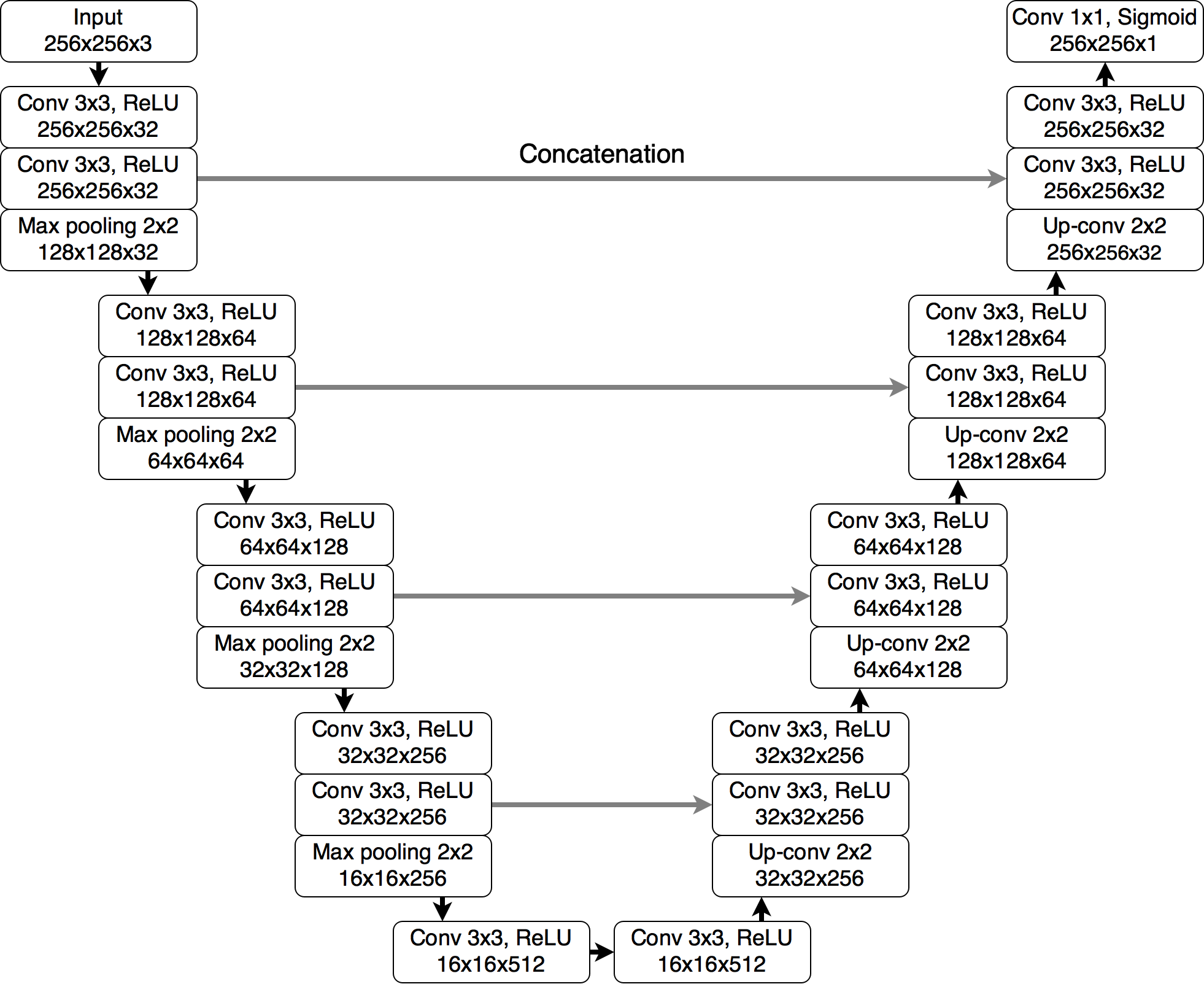

    import torch
    import torch.nn as nn


    class UNet(nn.Module):
        """U-Net with four encoder/decoder stages joined by skip connections."""

        def __init__(self, in_channels, out_channels):
            super(UNet, self).__init__()

            def down_conv_block(in_channels, out_channels):
                # Two 3x3 convolutions, each followed by ReLU.
                # padding=1 keeps the spatial size unchanged.
                return nn.Sequential(
                    nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1),
                    nn.ReLU(inplace=True),
                    nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1),
                    nn.ReLU(inplace=True)
                )

            def up_conv_block(in_channels, out_channels):
                # 2x2 transposed convolution with stride 2 doubles height and width.
                return nn.ConvTranspose2d(in_channels, out_channels, kernel_size=2, stride=2)

            # 2x2 max pooling halves height and width between encoder stages.
            self.pool = nn.MaxPool2d(kernel_size=2)

            # Encoder: the channel count doubles at every stage.
            self.encoder1 = down_conv_block(in_channels, 32)
            self.encoder2 = down_conv_block(32, 64)
            self.encoder3 = down_conv_block(64, 128)
            self.encoder4 = down_conv_block(128, 256)

            self.bottleneck = down_conv_block(256, 512)

            # Decoder: each conv block receives the upsampled features concatenated
            # with the matching encoder output, hence the doubled input channels.
            self.upconv4 = up_conv_block(512, 256)
            self.decoder4 = down_conv_block(512, 256)
            self.upconv3 = up_conv_block(256, 128)
            self.decoder3 = down_conv_block(256, 128)
            self.upconv2 = up_conv_block(128, 64)
            self.decoder2 = down_conv_block(128, 64)
            self.upconv1 = up_conv_block(64, 32)
            self.decoder1 = down_conv_block(64, 32)

            # 1x1 convolution maps the 32 feature channels to out_channels.
            self.output = nn.Conv2d(32, out_channels, kernel_size=1)

        def forward(self, x):
            # Encoder path: keep every stage's output for the skip connections.
            enc1 = self.encoder1(x)
            enc2 = self.encoder2(self.pool(enc1))
            enc3 = self.encoder3(self.pool(enc2))
            enc4 = self.encoder4(self.pool(enc3))

            bottleneck = self.bottleneck(self.pool(enc4))

            # Decoder path: upsample, concatenate with the skip tensor along the
            # channel dimension (dim=1), then convolve.
            dec4 = self.upconv4(bottleneck)
            dec4 = torch.cat((dec4, enc4), dim=1)
            dec4 = self.decoder4(dec4)

            dec3 = self.upconv3(dec4)
            dec3 = torch.cat((dec3, enc3), dim=1)
            dec3 = self.decoder3(dec3)

            dec2 = self.upconv2(dec3)
            dec2 = torch.cat((dec2, enc2), dim=1)
            dec2 = self.decoder2(dec2)

            dec1 = self.upconv1(dec2)
            dec1 = torch.cat((dec1, enc1), dim=1)
            dec1 = self.decoder1(dec1)

            return self.output(dec1)


    # Smoke test: a 3-channel 256x256 input yields a 1-channel 256x256 output.
    model = UNet(in_channels=3, out_channels=1)
    input_tensor = torch.randn(1, 3, 256, 256)
    output_tensor = model(input_tensor)
    print(output_tensor.shape)  # torch.Size([1, 1, 256, 256])
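The listing pools four times and upsamples four times, so the skip-connection concatenations only line up when the input height and width are divisible by 16 (2^4). The sketch below traces one spatial dimension through that sequence in plain Python, without PyTorch, to show where a mismatch would occur. The helper names `trace_unet_sizes` and `next_valid_size` are hypothetical and not part of the original code. It assumes size-preserving 3x3 convolutions, `MaxPool2d(kernel_size=2)` with its default floor rounding, and `ConvTranspose2d(kernel_size=2, stride=2)` doubling the size exactly.

```python
def trace_unet_sizes(size, depth=4):
    """Trace one spatial dimension through `depth` pool/upsample stages.

    Hypothetical helper: returns (skip_sizes, bottleneck_size, mismatches),
    where each mismatch is (decoder_level, upsampled_size, skip_size).
    """
    # Encoder: record the size of each skip tensor, then halve (floor) for the pool.
    skip_sizes = []
    current = size
    for _ in range(depth):
        skip_sizes.append(current)
        current //= 2
    bottleneck_size = current

    # Decoder: double the size and compare it with the matching skip tensor.
    mismatches = []
    for level in range(depth, 0, -1):
        current *= 2
        skip = skip_sizes[level - 1]
        if current != skip:
            # A channel-wise concatenation of these two tensors would fail here.
            mismatches.append((level, current, skip))
            break
    return skip_sizes, bottleneck_size, mismatches


def next_valid_size(size, depth=4):
    """Round `size` up to the nearest multiple of 2**depth."""
    multiple = 2 ** depth
    return -(-size // multiple) * multiple


print(trace_unet_sizes(256))   # no mismatches for the 256x256 input used above
print(trace_unet_sizes(100))   # the level-3 skip (25) does not match the upsampled size (24)
print(next_valid_size(100))    # 112, the next size that is divisible by 16
```

One way to use this is to pad or resize inputs to `next_valid_size(h)` by `next_valid_size(w)` before calling the model, then crop the output back to the original size; whether padding or resizing is appropriate depends on the task.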